- Wget Download List Of Files In Windows 10

- Wget Download List Of Files Download

- Wget Download List Of Files 2017

- Wget Download List Of Files Online

Download ALL Folders, SubFolders, and Files using Wget. Using Wget to download files with a specific name off a site. Download directory & subdirectories via.

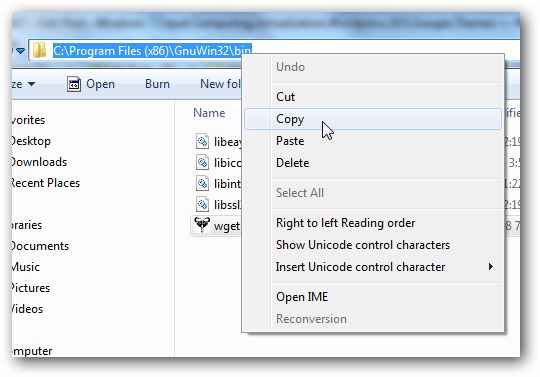

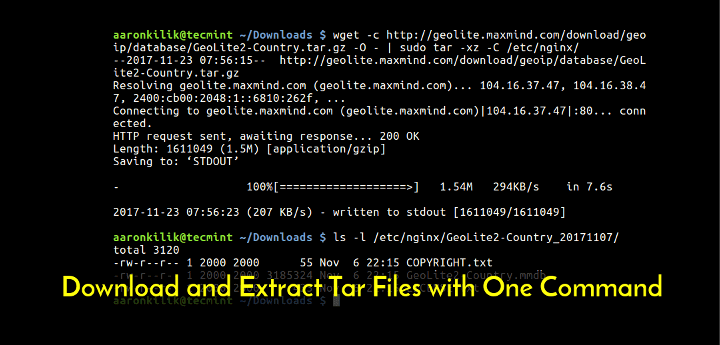

`wget -i path/to/textfile` downloads every URL listed in the text file, one by one, and saves the files in the present working directory. This works well if you already have a list of links to download. If the links all sit on the same page, you can instead use a download plug-in such as DownThemAll for Firefox. wget can do a lot more besides.

I want to get a list of files available for download from a usgs.gov site. My thought was to use wget to get a list of files available without actually downloading them. Is there something I am doing that is causing this to take so long?

Newer isn’t always better, and the wget command is proof. First released back in 1996, this application is still one of the best download managers on the planet. Whether you want to download a single file, an entire folder, or even mirror an entire website, wget lets you do it with just a few keystrokes.

Jump to Automating/scripting download process - #!/bin/sh # wget-list: manage the list of downloaded files # invoke wget-list without arguments while.

Wget is a free utility – available for Mac, Windows and Linux (included) – that can help you accomplish all this and more. What makes it different from most download managers is that wget can follow the HTML links on a web page and recursively download the files.
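The `wget -i` usage and the "list files without downloading them" idea mentioned above can be sketched roughly as follows. This is only an illustration: `urls.txt`, the `downloads` directory, the `example.com` URLs, and all function names are placeholders that do not come from the original posts. The listing helper also assumes that request lines in wget's log start with `--DATE TIME--` followed by the URL, which may not hold for every wget version.

```shell
#!/bin/sh
# Sketch only: urls.txt, downloads/ and the example.com URLs are placeholders.

# Write one URL per line into the list file that `wget -i` will read.
write_url_list() {
    cat > "$1" <<'EOF'
https://www.example.com/files/report-1.pdf
https://www.example.com/files/report-2.pdf
EOF
}

# Download every URL in the list file ($1) into a directory ($2, default: current dir).
fetch_list() {
    wget -i "$1" -P "${2:-.}"
}

# Pull the URL (third field) out of each request line of a wget log read from stdin.
# Assumes request lines look like "--2024-01-01 12:00:00--  URL".
extract_urls() {
    awk '/^--/ { print $3 }' | sort -u
}

# Crawl below $1 in spider mode (nothing is kept on disk) and print the URLs visited.
list_remote_files() {
    wget --spider -r --no-parent "$1" 2>&1 | extract_urls
}

write_url_list urls.txt
# Uncomment to run against a real server:
# fetch_list urls.txt downloads
# list_remote_files https://www.example.com/files/
```

Even in spider mode, a recursive crawl still has to request every HTML page to discover its links, which may be one reason such a listing takes a long time on a large site.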

I'm using wget on my CentOS server to download files from the Internet. Sometimes I copy files to the server with scp. I'm looking for a command that shows me a list of the files that were downloaded recently, or even all of them. So my question is, how do I do this?

cyprian

1 Answer

wget downloads: grep will print all lines containing the word 'wget' from the user's .bash_history file. .bash_history is a text file with the latest 1000 commands. (Example default setting; it can vary.)

See /home/[user-name]/.bash_history
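The grep command itself is not preserved in this copy of the answer. A minimal sketch of the idea, assuming the history lives in `~/.bash_history` (the function name `list_wget_commands` is made up for illustration), might look like this:

```shell
#!/bin/sh
# Sketch only: list_wget_commands is a hypothetical helper name.

# Print every line of a history file that contains "wget" as a whole word.
# $1 is the history file; it defaults to the current user's ~/.bash_history.
list_wget_commands() {
    history_file="${1:-$HOME/.bash_history}"
    grep -w 'wget' "$history_file"
}

# Example: list_wget_commands /home/[user-name]/.bash_history
```

This shows the wget commands that were typed, not the files themselves, and only commands that bash has already written to the history file.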

Knud Larsen


Wget Download List Of Files Download

I have been using Wget, and I have run across an issue. I have a site that has several folders and subfolders within it. I need to download all of the contents within each folder and subfolder. I have tried several methods using Wget, and when I check the result, all I can see in the folders is an 'index' file. I can click on the index file, and it will take me to the files, but I need the actual files.

Does anyone have a command for Wget that I have overlooked, or is there another program I could use to get all of this information?

site example:

www.mysite.com/Pictures/

Within the Pictures dir, there are several folders...

www.mysite.com/Pictures/Accounting/

www.mysite.com/Pictures/Managers/North America/California/JoeUser.jpg

I need all files, folders, etc...

Horrid Henry

3 Answers

I want to assume you've not tried this:

or to retrieve the content, without downloading the 'index.html' files:

Reference: Using wget to recursively fetch a directory with arbitrary files in it
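The two commands this answer refers to did not survive in this copy. As a sketch only, assuming the answer relied on wget's recursive mode with `--no-parent` and, for the second variant, `--reject` to discard the generated index pages, they could be wrapped like this (function names are hypothetical; the URL is the one from the question):

```shell
#!/bin/sh
# Assumed reconstruction; the original commands are missing from this copy.

# Recursively download everything below $1 without climbing to the parent directory.
fetch_tree() {
    wget -r --no-parent "$1"
}

# Same as above, but do not keep the auto-generated index.html listing pages.
fetch_tree_without_index() {
    wget -r --no-parent --reject "index.html*" "$1"
}

# Example:
# fetch_tree_without_index http://www.mysite.com/Pictures/
```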


Felix Imafidon

I use

`wget -rkpN -e robots=off http://www.example.com/`

- -r means recursively.
- -k means convert links, so links on the downloaded pages will point to the local copies instead of example.com/bla.
- -p means get all webpage resources, so it obtains the images and JavaScript files needed to make the website work properly.
- -N turns on timestamping, so if local files are newer than the files on the remote website, they are skipped.
- -e is a flag option; it needs to be there for the robots=off to work.
- robots=off means ignore the robots file.

I also had -c in this command, so if the connection dropped it would continue where it left off when I re-ran the command. I figured -N would go well with -c.

Tim Jonas
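The variant with `-c` that this answer mentions could be wrapped as below. This is a sketch under the assumption that `-c` is simply added to the flags already listed; `mirror_site` is a hypothetical name.

```shell
#!/bin/sh
# Sketch only: mirror_site is a hypothetical wrapper around the answer's command.

mirror_site() {
    # -r  recurse into links          -k  convert links for local viewing
    # -p  fetch page requisites       -N  timestamping (skip files that are not newer remotely)
    # -c  continue partially downloaded files after an interrupted run
    # -e robots=off  ignore robots.txt
    wget -rkpN -c -e robots=off "$1"
}

# Example; re-running the same call after a dropped connection resumes the work:
# mirror_site http://www.example.com/
```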

`wget -m -A '*' -pk -e robots=off www.mysite.com/`

This will download all types of files locally and point to them from the HTML file, and it will ignore the robots file.

Abdalla Mohamed Aly Ibrahim